The Eval Step Founders Skip

Before choosing between an AI and a rules-based architecture for a regulated product, you need an eval set. Most founders skip this step. In regulated industries, that’s a compliance exposure.

This is Nolte’s perspective, drawn from working hands-on with founders building software products in regulated industries.

There’s a question most founders building in healthcare, insurance, and fintech aren’t asking before they choose their architecture. It’s not a technical question. It’s a definitional one:

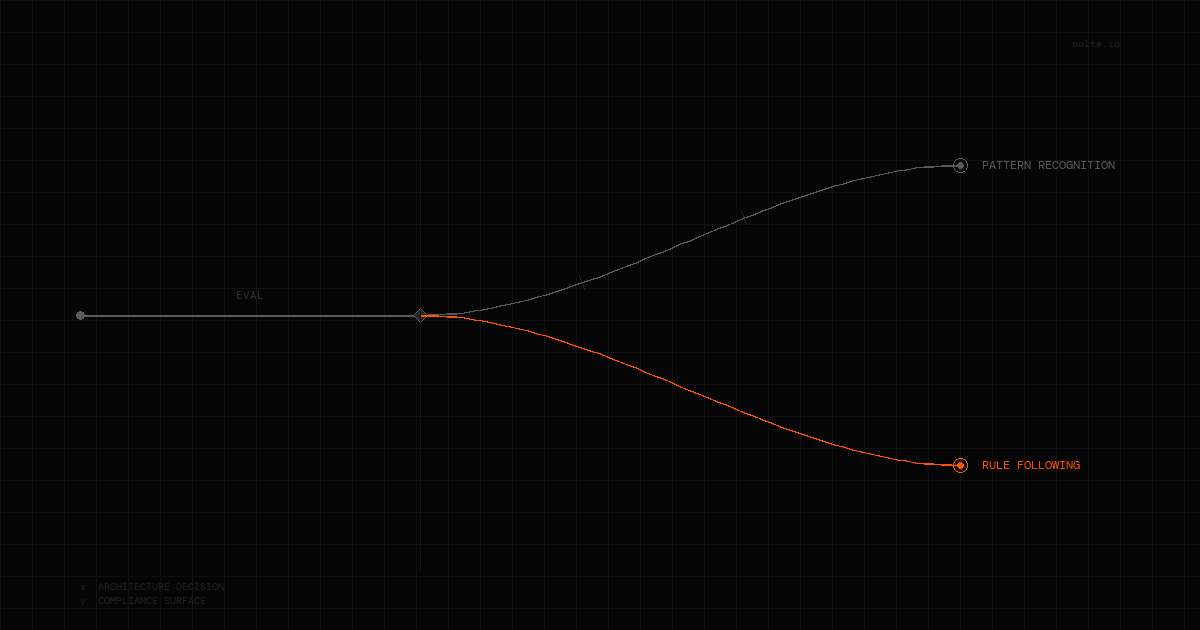

Is this a pattern-recognition problem or a rule-following problem?

The answer determines almost everything — which approach to use, how to test it, and how to know whether it’s actually working. Skipping it is the most common mistake we see in this space. And regulated industries are exactly where skipping it hurts most.

Why are defined correct outputs an asset in regulated industries?

Regulated industries have defined correct outputs. That’s not a limitation; it’s an asset most founders treat as a nuisance. A claim either qualifies or it doesn’t. An ICD code is either accurate or it isn’t. A disclosure is either compliant or it isn’t. The correct answer exists. It’s codified. In many cases, it’s been litigated.

This is the opposite of what AI is optimized for. Large language models are built for problems where “correct” is fuzzy: summarization, generation, synthesis across ambiguous inputs. They produce output by sampling from a probability distribution, so the same input can yield different outputs from run to run. That’s a strength when the answer is subjective. It’s a liability when the answer is defined by statute.

We’ve tested this directly. Working with a healthcare founder building a compliance-adjacent product, we ran outputs from multiple AI models against known-correct results. On compliance tasks, the deterministic, rules-based approach consistently matched or outperformed AI. That’s not because AI is bad; it’s because the task was rule-following, not pattern recognition. The model was being asked to do something a decision tree does better.
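To make “a decision tree does better” concrete, here is a minimal sketch of a rules-based eligibility check in Python. The `Claim` fields, the placeholder procedure codes, and the three rules are hypothetical, chosen only for illustration; a real product would encode its own policy or statute.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical claim record; field names are illustrative, not a real payer schema.
@dataclass(frozen=True)
class Claim:
    service_date: date
    coverage_start: date
    coverage_end: date
    procedure_code: str
    has_prior_auth: bool

# Hypothetical policy tables using placeholder codes (not real CPT codes).
COVERED_PROCEDURES = {"A100", "A200", "B300"}
PRIOR_AUTH_REQUIRED = {"B300"}

def claim_decision(claim: Claim) -> tuple[bool, str]:
    """Return (qualifies, reason). Each branch is one explicit rule, checked in a fixed order."""
    if not (claim.coverage_start <= claim.service_date <= claim.coverage_end):
        return False, "service date outside coverage period"
    if claim.procedure_code not in COVERED_PROCEDURES:
        return False, "procedure not covered"
    if claim.procedure_code in PRIOR_AUTH_REQUIRED and not claim.has_prior_auth:
        return False, "prior authorization missing"
    return True, "all rules satisfied"
```

The same claim always produces the same decision and the same reason, and the reason names the rule that fired, which is the kind of trace an audit can follow.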

Why does the failure come from a missing evaluation, not the technology?

Founders reach for AI because it feels like the right move for a modern product. That instinct isn’t wrong in general. It’s wrong when it replaces the prior question. Before you commit to any architecture in a regulated context, you need an eval set — a defined set of inputs with known-correct outputs — and you need to run your approach against it before you ship anything.
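A minimal sketch of that eval loop, assuming each case can be expressed as a dict of inputs plus one known-correct output. `EvalCase`, `EvalReport`, `run_eval`, and the toy cases are hypothetical names for illustration. The report keeps every mismatch by case id instead of only an accuracy figure, since in a regulated setting the individual failing cases are what an audit asks about.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

@dataclass
class EvalCase:
    case_id: str
    inputs: dict
    expected: Any  # the known-correct output, written down before any build

@dataclass
class EvalReport:
    total: int
    failures: list = field(default_factory=list)  # (case_id, expected, actual) tuples

    @property
    def accuracy(self) -> float:
        return (self.total - len(self.failures)) / self.total if self.total else 0.0

def run_eval(cases: list[EvalCase], approach: Callable[[dict], Any]) -> EvalReport:
    """Run one candidate approach over every case and record each mismatch individually."""
    report = EvalReport(total=len(cases))
    for case in cases:
        actual = approach(case.inputs)
        if actual != case.expected:
            report.failures.append((case.case_id, case.expected, actual))
    return report

if __name__ == "__main__":
    # Toy eval set and toy approach, both hypothetical.
    cases = [
        EvalCase("c1", {"amount": 500, "covered": True}, True),
        EvalCase("c2", {"amount": 500, "covered": False}, False),
        EvalCase("c3", {"amount": 0, "covered": True}, False),  # edge case: zero-amount claim
    ]
    naive = lambda x: x["covered"]  # ignores the zero-amount rule
    report = run_eval(cases, naive)
    print(f"accuracy={report.accuracy:.2f}")
    for failure in report.failures:
        print("FAIL", failure)
```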

Most founders don’t do this. They build the product, demo it under favorable conditions, and find out about the edge cases when volume surfaces them. In consumer software, that’s a product quality problem. In regulated industries, it’s a compliance exposure. The audit doesn’t care that the system worked 94% of the time. It cares about the 6%.

The regulated-industry context is actually an advantage here, and almost no one uses it. You have ground truth. The correct answer is often documented, sometimes legally defined. You can build a rigorous eval before you write production code. The founders who do this, who define correct before choosing architecture, make better technology decisions and ship more defensible products.

Where does AI win in regulated industries?

This isn’t an argument against AI in regulated spaces. There are tasks where probabilistic models are clearly the right tool: predicting claim likelihood, identifying fraud patterns, surfacing clinical anomalies at scale, extracting structure from unstructured clinical notes. These are pattern-recognition problems. AI is built for them.

The distinction is precise: AI on prediction tasks, rules on compliance tasks. The mistake is blurring that line because building with AI feels like the more ambitious choice. In a regulated context, the ambitious choice is the one that survives an audit.
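One way to keep that line sharp in code, sketched under assumptions: the compliance outcome comes only from a deterministic rules function, while a model’s score (for example, a fraud-pattern score) is attached as a separate field that can only flag a case for human review. `decide`, `Decision`, and the 0.8 threshold are hypothetical; `rules` and `risk_model` stand in for whatever the product actually uses.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Decision:
    qualifies: bool      # compliance outcome: set by deterministic rules only
    reason: str          # the rule that decided it, kept for the audit trail
    risk_score: float    # prediction: model output, used only to prioritize review
    needs_review: bool

def decide(
    claim: dict,
    rules: Callable[[dict], tuple[bool, str]],
    risk_model: Callable[[dict], float],
    review_threshold: float = 0.8,  # hypothetical cut-off
) -> Decision:
    qualifies, reason = rules(claim)
    score = risk_model(claim)
    # The model's score routes work to reviewers; it never changes the compliance outcome.
    return Decision(qualifies, reason, score, needs_review=score >= review_threshold)
```

Keeping the two outputs in separate fields makes it structurally impossible for a probabilistic score to flip a rule-determined answer.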

What should founders do with this?

Before you choose an architecture for any core feature in a regulated product:

Define what correct looks like. If you can’t write down what the right output is for a given input, you’re not ready to build. If you can, you’re ready to evaluate — and evaluation should happen before development, not after.
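A minimal sketch of “define correct first”, assuming each case records both its expected output and where that definition comes from. `GroundTruthCase`, `readiness_gaps`, and `ready_to_evaluate` are hypothetical names. This sketch uses `None` to mean “not yet defined”, so it assumes no correct output is itself `None`.

```python
from dataclasses import dataclass
from typing import Any, Optional

@dataclass
class GroundTruthCase:
    case_id: str
    inputs: dict
    expected: Optional[Any] = None   # None: the correct output hasn't been written down yet
    source: Optional[str] = None     # where "correct" is defined (policy section, statute, etc.)
    liability_critical: bool = False

def readiness_gaps(cases: list[GroundTruthCase]) -> list[str]:
    """Return ids of cases that lack a written-down correct output or its source."""
    return [c.case_id for c in cases if c.expected is None or not c.source]

def ready_to_evaluate(cases: list[GroundTruthCase]) -> bool:
    # An empty eval set is not "ready": there is nothing to evaluate against.
    return bool(cases) and not readiness_gaps(cases)
```

Any id returned by `readiness_gaps` is an input for which the right answer is still unknown, which by the test above means the feature isn’t ready to build.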

Then test both approaches against that definition. Not in a demo. Not with cherry-picked inputs. With a representative set of real cases, including edge cases, especially those that would create liability if they failed.
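A sketch of the side-by-side test, assuming each approach can be wrapped as a function from case inputs to an output. `Case`, `Result`, `compare`, the toy cases, and the `candidate_model` stand-in are hypothetical; in practice the candidate would call whichever model is under evaluation. Failures on cases flagged `liability_critical` go into their own list so they can’t disappear inside an aggregate pass rate.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

@dataclass
class Case:
    case_id: str
    inputs: dict
    expected: Any
    liability_critical: bool = False  # would a wrong answer here create liability?

@dataclass
class Result:
    passed: int = 0
    failed: list = field(default_factory=list)
    critical_failed: list = field(default_factory=list)

def compare(approaches: dict[str, Callable[[dict], Any]], cases: list[Case]) -> dict[str, Result]:
    """Run every approach over the same cases; record failures, separating liability-critical ones."""
    results = {name: Result() for name in approaches}
    for case in cases:
        for name, approach in approaches.items():
            r = results[name]
            if approach(case.inputs) == case.expected:
                r.passed += 1
            else:
                r.failed.append(case.case_id)
                if case.liability_critical:
                    r.critical_failed.append(case.case_id)
    return results

if __name__ == "__main__":
    cases = [
        Case("routine", {"covered": True, "amount": 200}, True),
        Case("zero-amount", {"covered": True, "amount": 0}, False, liability_critical=True),
    ]
    approaches = {
        "rules": lambda x: x["covered"] and x["amount"] > 0,
        "candidate_model": lambda x: x["covered"],  # hypothetical stand-in for a model call
    }
    for name, r in compare(approaches, cases).items():
        print(name, r.passed, r.failed, r.critical_failed)
```

Under the same assumptions, one reasonable gate is to rule out any approach with a non-empty `critical_failed` list before comparing pass rates at all.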

The answer will tell you which architecture to use. Sometimes it’s AI. Often, for compliance-critical paths, it isn’t.

The founders getting this right aren’t more technical than the ones getting it wrong. They’re just asking the question earlier.
