The Forecasting Gap

Every business function forecasts. Software still guesses. That needs to change.

TL;DR: Finance forecasts revenue. Sales forecasts pipeline. Operations forecasts capacity. Software development asks you to fund it and hope. It’s the only business function where leadership routinely approves spend without a reliable forecast of what it will produce, when, and at what cost. AI didn’t close this gap. It made it wider. The firms that close it with forecast-based delivery will define the next era of the industry.

What a Closed Gap Looks Like

Dr. Josh Turnbull wanted to reinvent dental care. He had the clinical vision but no technical background, and a product that needed HIPAA-compliant infrastructure, integration with existing clinical workflows, a web booking system, and native mobile apps on iOS and Android.

We spent the first month on strategy and discovery: user interviews with patients, clinical workflow mapping, business viability analysis. Several features that seemed obvious at the outset got cut. Not because we couldn’t build them, but because the research showed they wouldn’t move the business. That decision protected Josh’s capital before a line of code was written.

Then we forecasted. Based on years of delivery data, we committed to a scope, a timeline, and a price. Not a range. A commitment: these deliveries, this cost, this date.

Four months from kickoff to launch. On time. On budget. To the dollar.

Dr. Josh: “They can build anything and do so beautifully and on time. They have also helped me tremendously in thinking strategically, even if it means I’m paying them less in the short term. They act like a partner in my business.”

Even if it means I’m paying them less. That sentence is the difference between estimation and forecasting. When a firm forecasts accurately and prices on delivery, protecting the client’s balance sheet isn’t charity. It’s the business model. Cutting scope that doesn’t serve the business makes the forecast tighter and the delivery faster. The incentives align by design.

Today, Breeze releases 3-5 new features per month, each one forecasted, priced, and delivered predictably. Josh plans his development investment the way he plans his lease, his payroll, and his equipment: as a known line item, not a variable expense. Full case study at nolte.io/work/breeze-case-study

The Gap Nobody Named

A CFO can forecast revenue twelve months out and be within single digits. Sales can forecast pipeline accurately enough to hire against it. Operations can forecast capacity well enough to sign supply contracts.

Ask a VP of Engineering what the next quarter of development will cost and deliver. The answer is almost always a hedge: it depends.

Software development is the only business function that asks leadership to fund it without a reliable forecast of what it will produce.

This isn’t just an engineering problem. It creates a cascading failure across every function that depends on engineering output:

The CFO can’t plan. IT projects run 45% over budget on average while delivering 56% less value than predicted. McKinsey and the University of Oxford studied over 5,400 IT projects and found a total cost overrun of $66 billion, more than the GDP of Luxembourg. 70% of software projects exceed their initial budget. Every additional year spent on a project increases cost overruns by 15%, often driven by compounding technical debt. For the CFO, software development isn’t a line item. It’s a moving target that destabilizes quarterly financial planning. In an era where boards demand capital discipline, engineering remains the one function where the finance team is asked to approve spend without a reliable forecast of what it will produce.

The CEO can’t answer the board. In most companies, no executive is explicitly responsible for ensuring that what engineering delivers actually aligns with business goals. When the board asks “what did our engineering spend produce last quarter?” the CTO counters with DORA metrics, uptime percentages, and deployment frequency. None of those translate to a bottom-line revenue number. The inability to demonstrate engineering ROI in business terms isn’t the CTO’s personal failure. It’s a system that was never built to produce that answer.

The CTO can’t forecast their own function. Jellyfish analyzed over 25,000 deliverables across 3,600 teams and found that only 24% were delivered on time. Just 9% of teams delivered consistently on time. The unpredictability radiates outward: marketing can’t plan campaigns around feature launches, sales can’t sell roadmap items with confidence, and customer success can’t set realistic expectations. Developers working in unpredictable environments are more prone to burnout, produce lower-quality code under unrealistic deadlines, and leave, compounding the problem further.

The product leader can’t prioritize. Without a forecast, every feature request is evaluated on gut feel rather than financial tradeoff. “If we build X, we don’t build Y this quarter” is invisible because neither X nor Y has a forecasted cost, timeline, or expected business impact attached to it. The model says yes to everything without surfacing what that yes actually costs.

Every other business function closed its forecasting gap decades ago. Software hasn’t. And the reason isn’t complexity. It’s that the prevailing model doesn’t require it.

Why the Gap Persists

The gap persists because the dominant business model profits from it.

When agencies bill by the hour, there is no financial incentive to forecast accurately. The longer work takes, the more the agency earns. Scope changes are upside. Rework is billable. An accurate forecast would expose how often the model fails the client, so the model avoids forecasting entirely and relies on estimates instead.

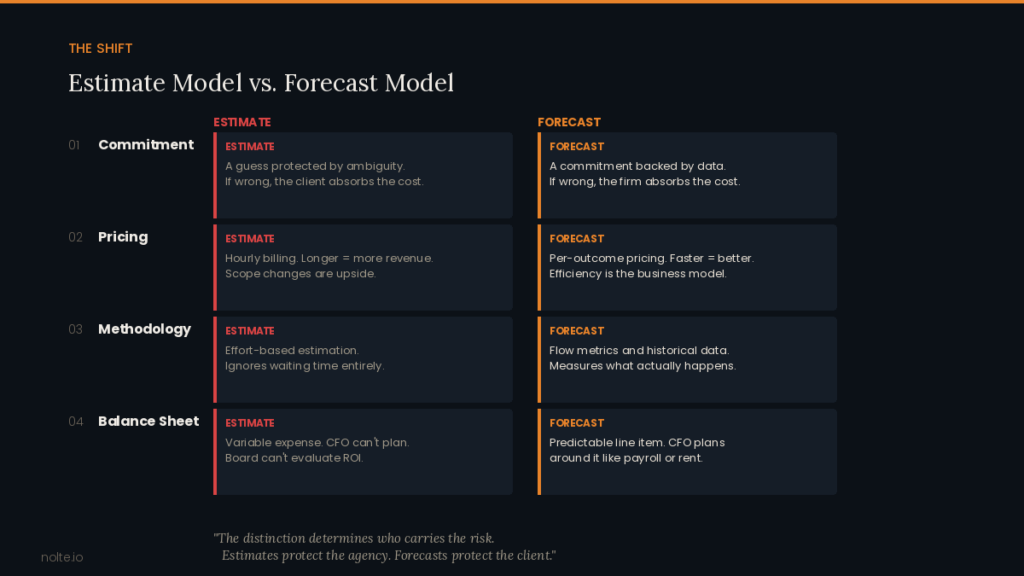

An estimate is not a forecast. A forecast is a commitment backed by data. It says, based on what we’ve measured, here’s what we expect to deliver, when, and at what cost. An estimate is a guess protected by ambiguity. It says, we think it’ll be roughly this, but if we’re wrong, that’s just the nature of the work.

The distinction matters because it determines who carries the risk. In the estimate model, the client absorbs all of it: they pay whether it ships or not, on time or not, functional or not. The agency carries none. Forecast-based delivery inverts this: the firm commits to a forecast and absorbs the cost when it misses. That structural difference changes every decision the firm makes. (For a deeper look at why we stopped estimating entirely, see Why We Don’t Estimate (And How We Still Predict Delivery).)

I’ve Been on the Wrong Side of This

I didn’t build a forecasting system because I had a vision. I built it because I failed a client and a fifty-year-old law explained why.

Early in Nolte’s history, our biggest client was an early-stage startup burning venture capital to build their product. They kept sharing new requests. We kept saying yes. The scope grew. The retainer grew. So we did what seemed logical: we added headcount, expecting proportional impact.

Throughput grew, but nowhere near proportionally to the investment. Double the people did not mean double the output. And predictability got worse, not better.

Brooks’s Law, from Fred Brooks’s The Mythical Man-Month, published in 1975, explains exactly why: adding people to a software project increases communication overhead quadratically. Every person you add creates new coordination paths, new handoffs, new onboarding cost. The gains from additional capacity get eaten by the system’s complexity. More context switching, more meetings, more dependencies, slower decisions.

Every company that has tried to scale engineering by adding headcount without redesigning how the work flows has hit this wall. Startups do it internally. Enterprises do it with contractors. Agencies do it with retainers. Brooks wrote about it fifty years ago. The industry is still making the same mistake.

The result: the client was burning cash at an accelerating rate and I couldn’t answer the most basic question: what are you getting for this money and when will it be done?

That’s the moment I knew the model was broken. Not theoretically. I was running the firm that couldn’t give its own client a forecast. Every additional hour we billed made the problem worse, not better.

It took years to build what is becoming NolteOS, but the commitment started with that failure.

AI Didn’t Close the Gap. It Made It Wider.

This is the part most people get wrong.

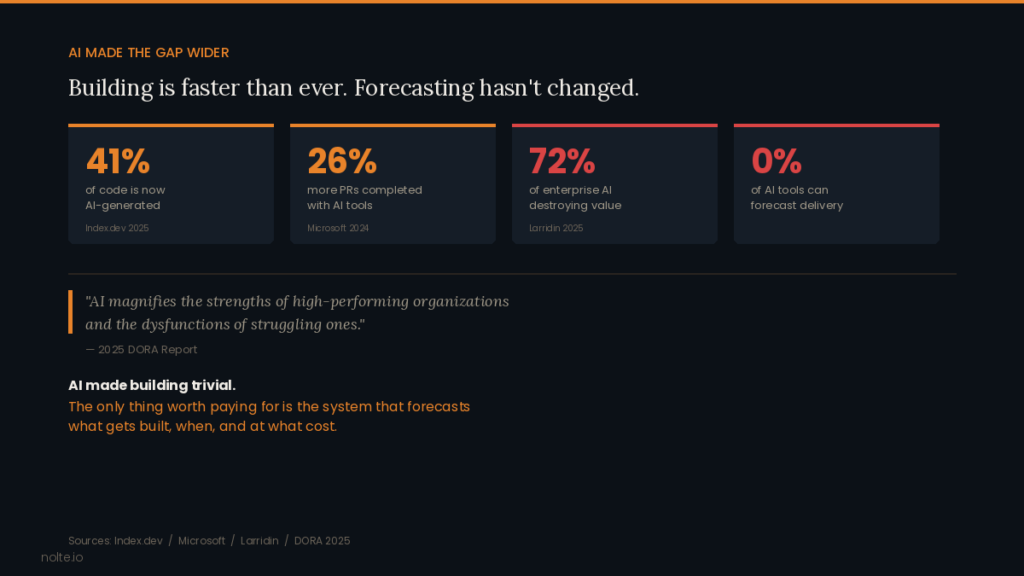

AI can write code, check its own logic, catch bugs, and generate features. 41% of all code is now AI-generated or assisted. Microsoft’s study of 5,000 developers showed a 26% increase in completed pull requests with AI tools. Building software has never been faster or cheaper.

But building was never the gap. Forecasting was. And AI made the forecasting gap worse, not better.

Here’s why: delivery is not development. AI accelerated development, the writing of code. But delivery is everything around it: defining requirements, making decisions, managing dependencies, ensuring production readiness, measuring business impact. AI left all of that untouched. Organizations can now generate code faster than they can define requirements, validate alignment, ensure production readiness, and measure outcomes. The bottleneck shifted from “can we build it?” to “should we build it, when will it ship, what will it cost, and will it matter?”

AI tools can generate a feature. They cannot tell you whether that feature is the right thing to build, whether it aligns with the business objective, not just one person’s assumption. They cannot handle the production-grade context (infrastructure, monitoring, test coverage, deployment) that separates a demo from a product. They cannot replace the organizational judgment required to protect a client’s capital by saying “don’t build this.” And they cannot forecast: they have no model for predicting when a set of deliverables will ship at what cost with what confidence level.

AI makes building trivial. That means the only thing worth paying for is the system that determines what gets built, forecasts when it’ll be done, and ensures every dollar spent creates business value.

The 2025 DORA Report confirmed it: AI magnifies the strengths of high-performing organizations and the dysfunctions of struggling ones. Firms with forecasting systems get turbocharged. Firms without them produce more code, faster, with less predictability than ever.

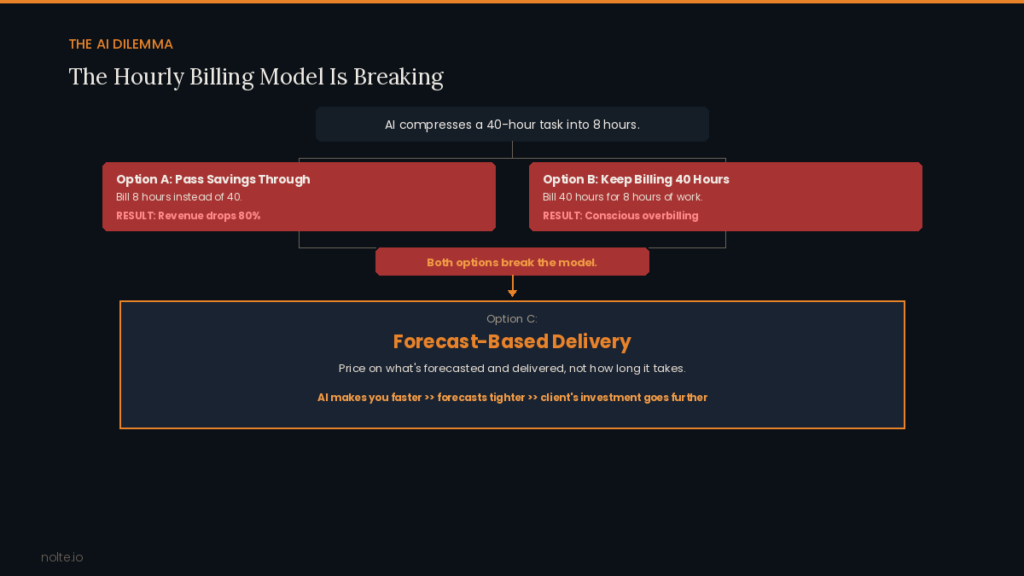

When AI compresses a 40-hour task into 8, hourly-billing firms face an impossible choice: cut revenue by 80% or consciously overbill. That choice only exists when you sell time. Forecast-based delivery eliminates it entirely: AI makes you faster, which makes your forecasts more efficient, which makes the client’s investment go further. The incentives align.

Meanwhile, 72% of enterprise AI investments are already destroying value through waste, and boards are demanding ROI proof rather than adoption metrics. The accountability era has arrived, and accountability requires forecasting.

Closing the Gap

The methodology for forecasting software delivery exists. Flow metrics (cycle time, throughput, work in progress) have been used by high-performing teams for years. Sonya Siderova and her team at Nave have demonstrated that probabilistic forecasting based on historical flow data produces dramatically more reliable commitments than effort-based estimation. Their core insight influenced how we think about delivery: estimation based on effort is fundamentally unreliable because waiting time represents the vast majority of delivery time. No estimation framework accounts for that. Flow metrics do.

But measurement is not transformation. Knowing your cycle time is useful. Restructuring your entire business model around it (pricing, scoping, risk allocation, client relationships) is a different thing entirely.

That’s what NolteOS does. It’s not a dashboard. It’s a forecasting engine that determines what we commit to, what we charge, and what happens when we miss. Little’s Law, the mathematical foundation of flow-based delivery, states that average items in a system equals arrival rate multiplied by average time per item. When you manage work in progress, cycle time stabilizes. When cycle time stabilizes, throughput becomes consistent. When throughput is consistent, you can forecast. NolteOS converts years of this data into forward-looking commitments the same way a financial model converts historical revenue into projections.

Our delivery prediction accuracy over three years is 95%. Not because we guess better, but because we built an operational system where forecasting accuracy is the metric that everything else serves. When we miss (and the 5% exists) the cost is ours, not the client’s. That’s what makes it a forecast and not an estimate: the firm that makes the commitment bears the risk of missing it.

Where the Gap Is Closable

An honest caveat: this model isn’t for everyone.

Too early (pre-seed, seed, pre-revenue) and there’s nothing to forecast against. The company is still figuring out what to build. The right model at that stage is speed, experimentation, and learning. Forecasting is premature when the product itself is undefined.

Too late (enterprise scale, post-IPO, thousands of engineers) and the company should be building its own internal forecasting infrastructure. At Uber’s scale, entire teams are dedicated to engineering productivity metrics. They don’t need an outside firm to forecast for them. And at that size, the problem isn’t development speed. It’s decision-making, dependencies, and organizational politics. Forecasting doesn’t fix those from the outside.

The window is the middle. Series A through D. Companies with 20 to 200 employees that have real engineering spend, real accountability to investors or a board, and no system connecting the two. They’re big enough that someone (a CFO, a board member, a lead investor) is asking hard questions about engineering ROI. They’re scaling fast enough that the old “just hire more engineers” playbook is hitting the Brooks’s Law wall. And they’re small enough that delivery still means development. Decisions can be made quickly, and a forecasting system creates immediate, visible impact.

These are companies where the CEO just got the board question for the first time: “what did we get for that $2M in engineering spend last quarter?” And nobody in the room had a good answer. Or they’re 30-person companies where the founder is writing checks for development and has no way to predict what those checks will produce next quarter. They have two options: build an internal forecasting system from scratch (which takes years and a leadership hire they may not be ready for) or work with a firm that already has one.

That’s where we operate. Not because we can’t work outside that window. Because that’s where the gap is widest, the pain is sharpest, and the forecast-based delivery model creates the most value.

What Changes When the Gap Closes

When software delivery becomes forecastable, everything downstream changes:

For the CFO: Development spend becomes a predictable line item. You can plan around it, budget against it, and report on it the same way you report on every other function. No more quarter-end surprises from engineering overruns. No more approving spend without a forecast of what it will produce.

For the CEO: You can answer the board. You know what the development investment produced, what it cost, and what’s coming next quarter. Engineering becomes a strategic asset you can point to, not a cost center you defend.

For the product leader: Every potential feature has a forecasted cost and timeline before you commit. Prioritization becomes a financial conversation, not a gut call. “If we build X, we don’t build Y this quarter” is visible in real numbers, and the decision is made before the money is spent, not after.

For the CTO: You stop managing by heroics and start managing by system. The team’s performance is measured by forecast accuracy and throughput, metrics you can improve systematically, not by how many hours they logged or how many sprints they completed.

For the balance sheet: Every decision the firm makes (scope, architecture, team composition, tool selection) is filtered through “does this serve the business?” When a firm only profits from delivery efficiency, protecting the client’s capital is the strategy, not the exception.

20 Years, Three Waves

I founded Nolte in 2006. Three waves of disruption have hit this industry since: offshore outsourcing, no-code platforms, and AI-assisted development. Each one made building easier. None closed the forecasting gap. (Here’s what those waves actually taught us.)

Today we deliver 75 forecasted deliverables per month, targeting 150-200 by year end. Every engagement is forecast-based: priced per delivery, not per hour, not per retainer. And every decision we make is governed by a single principle: technology in service of the business.

Your development budget should be a forecast, not a gamble. Your roadmap should be a plan you can staff against, invest around, and present to your board. Software delivery should be as boring and as predictable as every other business function your company depends on.

The forecasting gap is closable. We’ve closed it. The firms that define the next era of this industry will be the ones that close it too.

One Question

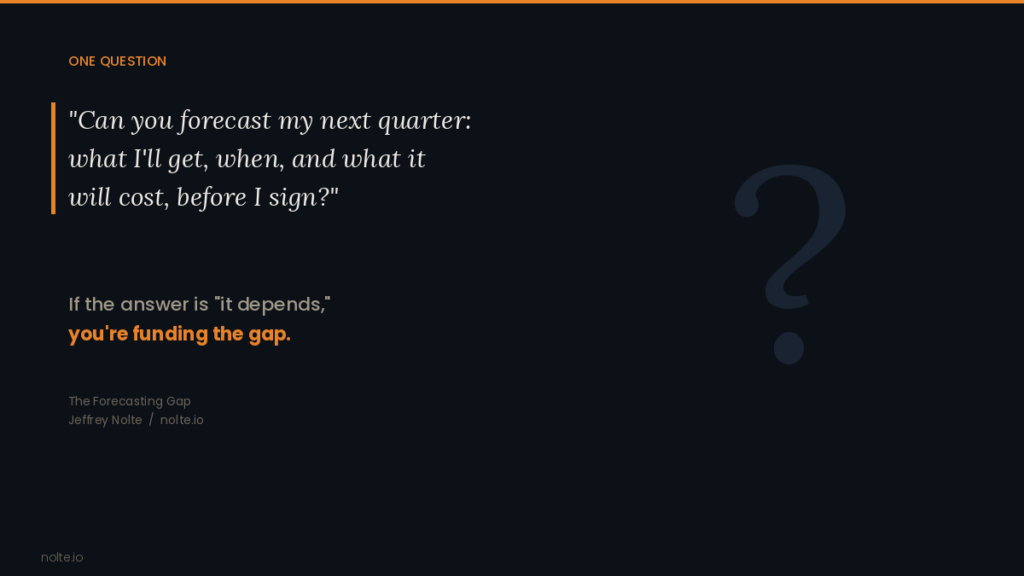

Ask your development partner:

Can you forecast my next quarter: what I’ll get, when, and what it will cost, before I sign?

If the answer is “it depends,” you’re not getting a forecast. You’re funding the gap.

If you want to see the forecasting model rather than read about it, book a call. If you run an agency and want to close the gap, reach out. The system is hard-won but not secret. This industry gets better when more firms make software delivery forecastable.